diffray vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

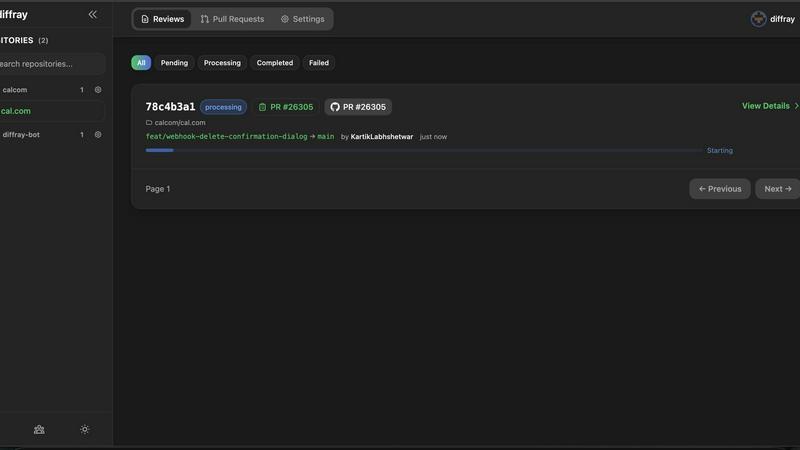

diffray

diffray uses 30 AI agents to catch real bugs in your code, not just nitpicks.

Last updated: February 28, 2026

OpenMark AI benchmarks 100+ LLMs on your task: cost, speed, quality & stability. Browser-based; no provider API keys for hosted runs.


Overview

About diffray

diffray is a multi-agent AI code review platform built to reduce the noise and low signal of traditional single-model review tools. Instead of one general-purpose model, it deploys more than 30 specialized AI agents, each focused on a domain such as security vulnerabilities, performance bottlenecks, bug patterns, or data consistency. The goal is feedback that is precise and actionable rather than generic or speculative, so developers can trust it and act on it directly.

Its central feature is codebase-aware investigation. diffray analyzes the context of your entire repository to learn the project's established patterns, libraries, and architectural decisions. That context lets it flag issues that only show up against the rest of the codebase, such as duplicate utilities, type drift, and non-atomic database operations, while avoiding redundant suggestions about patterns your team already uses.

diffray reports an 87% reduction in false positives, 3x more real bugs caught, and PR review time reduced from an average of 45 minutes to 12 minutes per week. It is aimed at engineering teams of any size, from fast-moving startups to large enterprises, that want context-aware code review.
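To make one of the bug classes above concrete, here is a small, hypothetical illustration of a non-atomic database operation: a read-modify-write split across a `SELECT` and an `UPDATE`, compared with a single conditional `UPDATE`. It uses Python's standard `sqlite3` module with an in-memory database; the table, the function names, and the sequential "two clients" interleaving are all assumptions made for the demo. It is not diffray output and does not show how diffray detects the problem.

```python
import sqlite3


def make_db(balance: int = 100) -> sqlite3.Connection:
    # In-memory database with one account row, just for the demo.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER NOT NULL)")
    conn.execute("INSERT INTO accounts (id, balance) VALUES (1, ?)", (balance,))
    conn.commit()
    return conn


def read_balance(conn: sqlite3.Connection, account_id: int) -> int:
    (balance,) = conn.execute(
        "SELECT balance FROM accounts WHERE id = ?", (account_id,)
    ).fetchone()
    return balance


def write_balance(conn: sqlite3.Connection, account_id: int, balance: int) -> None:
    with conn:  # commits on success, rolls back on exception
        conn.execute("UPDATE accounts SET balance = ? WHERE id = ?", (balance, account_id))


def withdraw_atomic(conn: sqlite3.Connection, account_id: int, amount: int) -> bool:
    # The funds check and the write happen in one UPDATE statement, so no
    # other writer can slip in between them. Returns False if funds are short.
    with conn:
        cur = conn.execute(
            "UPDATE accounts SET balance = balance - ? WHERE id = ? AND balance >= ?",
            (amount, account_id, amount),
        )
    return cur.rowcount == 1


def lost_update_demo() -> int:
    # Non-atomic read-modify-write, with two clients simulated sequentially:
    # both read before either writes, so the second write overwrites the first.
    conn = make_db(100)
    seen_by_a = read_balance(conn, 1)
    seen_by_b = read_balance(conn, 1)
    write_balance(conn, 1, seen_by_a - 30)
    write_balance(conn, 1, seen_by_b - 50)
    return read_balance(conn, 1)


def atomic_demo() -> int:
    conn = make_db(100)
    withdraw_atomic(conn, 1, 30)
    withdraw_atomic(conn, 1, 50)
    return read_balance(conn, 1)


print(lost_update_demo())  # 50: the 30-unit withdrawal was lost
print(atomic_demo())       # 20
```

The pattern to look for in review is a value read in one statement and written back in another without a transaction or a conditional write tying them together.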

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
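The idea of measuring stability across repeat runs can be sketched as follows: group repeated results per model and report the spread of quality scores alongside the means. The `RunResult` schema, the 0-10 quality scale, the model names, and the use of population standard deviation as the stability measure are all assumptions for illustration, not OpenMark AI's actual data model or scoring method.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class RunResult:
    # One repeat run of the same task against one model (hypothetical schema).
    model: str
    cost_usd: float
    latency_s: float
    quality: float  # assumed 0-10 score


def summarize(runs: list[RunResult]) -> dict[str, dict[str, float]]:
    # Group runs by model, then aggregate each group.
    by_model: dict[str, list[RunResult]] = {}
    for r in runs:
        by_model.setdefault(r.model, []).append(r)
    summary: dict[str, dict[str, float]] = {}
    for model, rs in by_model.items():
        scores = [r.quality for r in rs]
        summary[model] = {
            "mean_cost_usd": mean(r.cost_usd for r in rs),
            "mean_latency_s": mean(r.latency_s for r in rs),
            "mean_quality": mean(scores),
            # Spread of quality across repeat runs: lower means more stable.
            "quality_stdev": pstdev(scores),
        }
    return summary


# Two hypothetical models with the same mean quality but different stability.
runs = [RunResult("model-a", 0.002, 1.2, q) for q in (8.0, 8.0, 8.0)] + [
    RunResult("model-b", 0.001, 0.8, q) for q in (10.0, 4.0, 10.0)
]
summary = summarize(runs)
```

Both models average the same quality, but only the spread reveals that `model-b` is far less predictable, which a single run could hide.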

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results from real API calls to the models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
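One simple way to express "quality relative to what you pay" is quality points per dollar, and ranking by that can pick a different model than ranking by price alone. The `ModelSummary` fields, the quality-divided-by-cost formula, and the numbers below are hypothetical; OpenMark AI may define cost efficiency differently.

```python
from dataclasses import dataclass


@dataclass
class ModelSummary:
    # Aggregated results for one model (hypothetical schema and numbers).
    model: str
    mean_quality: float   # assumed 0-10 score
    mean_cost_usd: float  # average cost per request, assumed > 0


def cost_efficiency(s: ModelSummary) -> float:
    # Quality points per dollar spent (an assumed formula).
    return s.mean_quality / s.mean_cost_usd


def rank_by_efficiency(summaries: list[ModelSummary]) -> list[str]:
    return [s.model for s in sorted(summaries, key=cost_efficiency, reverse=True)]


models = [
    ModelSummary("cheap", 3.0, 0.001),
    ModelSummary("mid", 8.0, 0.002),
    ModelSummary("premium", 9.0, 0.010),
]
cheapest = min(models, key=lambda s: s.mean_cost_usd).model
ranking = rank_by_efficiency(models)
```

Here the cheapest model per request is `cheap`, but `mid` delivers the most quality per dollar, which is the distinction the paragraph above draws.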

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.