Activepieces vs LLMWise

Side-by-side comparison to help you choose the right tool.

Activepieces

Activepieces is the essential no-code platform for building customizable AI agents to automate tasks.

Last updated: March 1, 2026

LLMWise

Access all top AI models through one API with smart routing and pay only for what you use.

Last updated: February 28, 2026

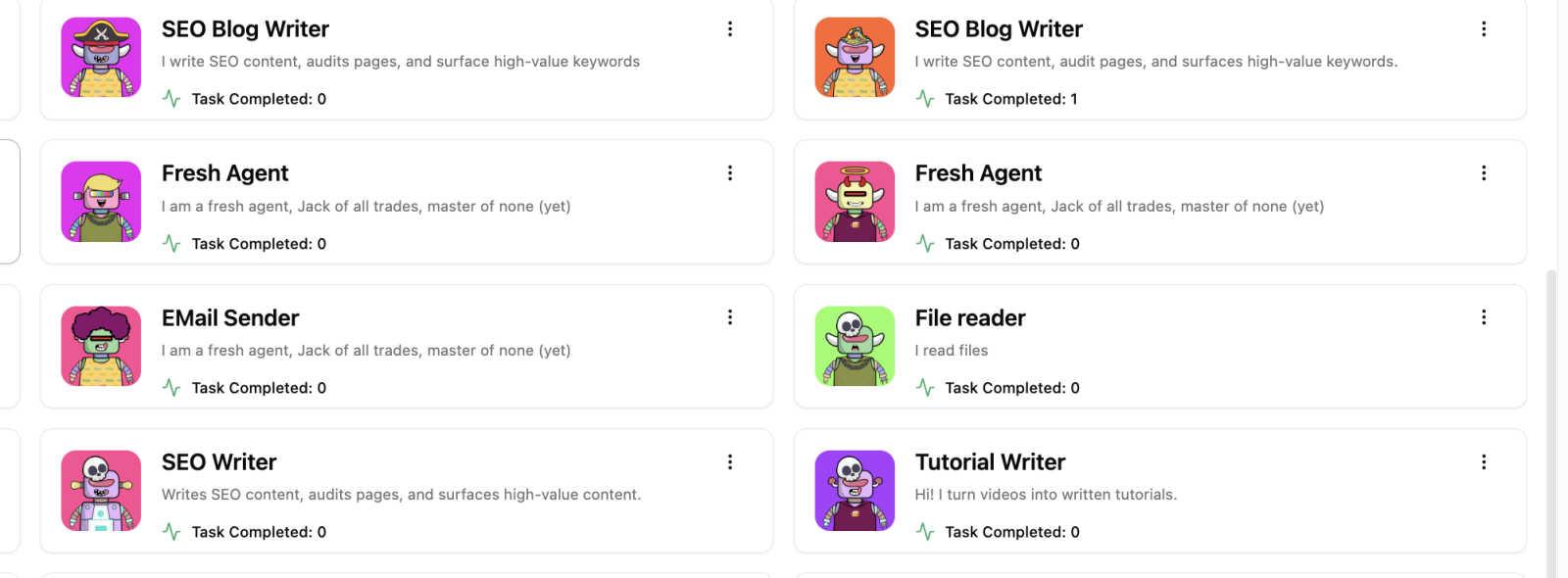

Visual Comparison

Activepieces

LLMWise

Feature Comparison

Activepieces

No-Code AI Agent Builder

The platform's foundational feature is an intuitive visual builder that lets anyone create AI agents in four steps: define the trigger, instruct the AI, connect the necessary tools, and deploy. No coding expertise is required. This accessibility supports AI adoption across marketing, sales, support, and operations teams, turning every employee into a potential automation builder.

600+ Enterprise Integrations

Activepieces connects to over 600 business applications, including Gmail, OpenAI, Slack, Notion, and HubSpot. This library lets you build end-to-end automated workflows that span your entire tech stack. Agents can act on real-time information only when they are connected to your data sources and communication tools, which makes this integration breadth a core requirement for serious automation.

Enterprise Control & Governance

For IT and security teams, Activepieces delivers essential oversight tools without adding complexity. It features advanced Role-Based Access Control (RBAC), Single Sign-On (SSO) with SAML 2.0, SCIM provisioning, and comprehensive audit logs. This level of governance is mandatory for enterprise deployment, ensuring secure access, compliance, and precise control over who can build, edit, and run automation flows within the organization.

Flexible Deployment Options

You can choose between a fully managed cloud (SOC 2 Type II compliant) and self-hosted deployment on your own infrastructure. The cloud option requires no maintenance on your side, while self-hosting keeps data within your network for strict compliance needs. This flexibility helps meet diverse security, regulatory, and operational requirements in industries such as fintech, healthcare, and legal tech.

LLMWise

Intelligent Model Routing

This is the foundational, must-have feature. You send a single prompt to the LLMWise API, and its smart routing engine automatically selects the optimal large language model for that specific task. It intelligently matches prompts to model strengths, sending coding queries to GPT, creative briefs to Claude, and translation requests to Gemini. This eliminates the guesswork and manual model selection, ensuring you consistently get the highest quality output for every request without any extra effort.
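The routing idea can be illustrated with a minimal sketch. This is not LLMWise's actual routing engine: the keyword lists, the `classify_task` and `route` function names, the model labels, and the default fallback are all hypothetical, chosen only to mirror the code, creative, and translation examples above.

```python
from typing import Optional

# Hypothetical mapping from task category to a model label.
ROUTES = {
    "code": "gpt",            # coding queries
    "creative": "claude",     # creative briefs
    "translation": "gemini",  # translation requests
}

# Hypothetical keyword hints; a real router would use a far richer signal.
KEYWORDS = {
    "code": ("function", "bug", "python", "compile", "stack trace"),
    "translation": ("translate", "translation"),
    "creative": ("story", "poem", "slogan", "tagline"),
}

DEFAULT_MODEL = "gpt"  # assumed fallback when no category matches


def classify_task(prompt: str) -> Optional[str]:
    """Return the first task category whose keyword appears in the prompt."""
    text = prompt.lower()
    for task, words in KEYWORDS.items():
        if any(word in text for word in words):
            return task
    return None


def route(prompt: str) -> str:
    """Pick a model label for the prompt, falling back to the default."""
    return ROUTES.get(classify_task(prompt), DEFAULT_MODEL)
```

Keyword matching is used here only because it is easy to read; the source does not say how the real engine classifies prompts.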

Compare, Blend, and Judge Modes

LLMWise provides essential orchestration modes that are critical for production-grade AI applications. Compare mode runs a single prompt across multiple models side-by-side in one request, allowing you to instantly benchmark speed, cost, and output quality. Blend mode takes this further by synthesizing the best parts of each model's response into one superior, consolidated answer. Judge mode enables models to evaluate and critique each other's outputs, providing an automated layer of quality assurance and validation.
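As a rough illustration of the Compare and Judge ideas, the sketch below runs one prompt through several model callables, records how long each takes, and then picks a winner with a scoring function. The `compare` and `judge` helpers and the shape of the result records are assumptions made for this example; they do not describe the LLMWise API, and the scoring function stands in for whatever evaluation a judging model would perform.

```python
import time
from typing import Callable, Dict, List


def compare(prompt: str, models: Dict[str, Callable[[str], str]]) -> List[dict]:
    """Run the same prompt through every model and time each call."""
    results = []
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        elapsed = time.perf_counter() - start
        results.append({"model": name, "output": output, "seconds": elapsed})
    return results


def judge(results: List[dict], score: Callable[[str], float]) -> dict:
    """Return the result whose output gets the highest score."""
    return max(results, key=lambda r: score(r["output"]))
```

In practice each callable would wrap a request to a provider, and `score` could itself be a call to another model.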

Resilient Circuit-Breaker Failover

LLMWise includes a built-in circuit-breaker system that fails over automatically to backup models if a primary provider experiences downtime or high latency. Your application stays operational during external API outages instead of failing along with the provider, so you can maintain uptime and deliver a reliable experience to end users without manual intervention.
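The general circuit-breaker pattern behind this kind of failover can be sketched as follows. This is a generic illustration, not LLMWise's implementation: the `CircuitBreaker` class, the `call_with_failover` helper, and the threshold and cooldown values are all hypothetical.

```python
import time


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors, then retries after a cooldown."""

    def __init__(self, max_failures=3, cooldown_seconds=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.cooldown_seconds = cooldown_seconds
        self.clock = clock          # injectable so the breaker can be tested
        self.failures = 0
        self.opened_at = None       # None means the breaker is closed

    def allow(self):
        """Return True if a call to the provider should be attempted."""
        if self.opened_at is None:
            return True
        # Once the cooldown has passed, trial requests are allowed again.
        return self.clock() - self.opened_at >= self.cooldown_seconds

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


def call_with_failover(prompt, providers, breakers):
    """Try providers in order, skipping any whose breaker is open."""
    for name, call in providers:
        breaker = breakers[name]
        if not breaker.allow():
            continue
        try:
            result = call(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("all providers unavailable")
```

Here `providers` is an ordered list of `(name, callable)` pairs, with the primary first and the backups after it.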

Test, Benchmark, and Optimize Suite

LLMWise offers a suite for testing and optimizing performance and cost, including benchmark suites, batch testing, and configurable optimization policies. You can set policies to prioritize speed, cost, or reliability for different types of requests. Automated regression checks help ensure that new model versions or prompts do not degrade your application's output quality, which supports continuous improvement.
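One way to picture a speed, cost, or reliability policy is as a choice of ranking key over measured model statistics. The sketch below is an assumption-laden illustration: the `ModelStats` fields, the policy names, and the `pick_model` function are hypothetical and are not taken from LLMWise's configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelStats:
    name: str
    latency_ms: float    # average response latency
    cost_per_1k: float   # cost per 1,000 tokens, in arbitrary units
    success_rate: float  # fraction of requests that succeeded


# Each policy maps to a sort key; the candidate with the lowest key wins.
POLICIES = {
    "speed": lambda m: m.latency_ms,
    "cost": lambda m: m.cost_per_1k,
    "reliability": lambda m: -m.success_rate,
}


def pick_model(candidates, policy):
    """Return the candidate that best satisfies the named policy."""
    if policy not in POLICIES:
        raise ValueError(f"unknown policy: {policy}")
    return min(candidates, key=POLICIES[policy])
```

A request type could then be tagged with a policy name, for example `"speed"` for chat and `"cost"` for batch jobs.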

Use Cases

Activepieces

Automated Sales Lead Management

A sales team must instantly qualify and route incoming leads. An Activepieces AI agent can automatically trigger when a new lead arrives in a CRM or form, score the lead based on predefined criteria, enrich it with data from other tools, and instantly notify the appropriate sales rep or add the lead to a personalized nurturing sequence. This eliminates manual data entry and ensures no opportunity is missed.
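The score-then-route step of such an agent could look like the sketch below. It is a plain Python illustration of the logic, not an Activepieces flow definition: the scoring criteria, the point values, the threshold, and the `score_lead` and `route_lead` names are all hypothetical.

```python
FREE_EMAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com"}


def score_lead(lead: dict) -> int:
    """Score a lead from 0 to 100 using simple, predefined criteria."""
    score = 0
    if lead.get("company_size", 0) >= 100:
        score += 40
    if lead.get("budget_confirmed", False):
        score += 40
    # A business email domain is treated as a weak positive signal.
    domain = lead.get("email", "").rsplit("@", 1)[-1].lower()
    if domain and domain not in FREE_EMAIL_DOMAINS:
        score += 20
    return score


def route_lead(lead: dict, threshold: int = 60) -> str:
    """Decide whether to alert a sales rep or start a nurturing sequence."""
    if score_lead(lead) >= threshold:
        return "notify_sales_rep"
    return "nurture_sequence"
```

In a real flow, the trigger would supply the `lead` record and the returned label would select the next action, such as a Slack notification or an email sequence.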

Intelligent Customer Support Triage

Customer support operations require efficient ticket sorting and prioritization. An AI agent can monitor support channels (email, chat), analyze incoming requests using natural language processing, categorize tickets by urgency and type, and auto-respond to common queries or assign them to the correct support agent. This is essential for reducing response times and improving customer satisfaction.

Personalized Marketing Campaign Execution

Marketing teams need to deliver timely, relevant messages. An agent can trigger based on user behavior (e.g., website visit, cart abandonment), pull customer data from a CDP, generate personalized email or message content using AI, and send it via the connected marketing platform. This automation is crucial for executing dynamic, one-to-one marketing at scale without manual intervention.

Automated Internal Reporting & Updates

Managers and operations teams require consistent reporting without manual compilation. An AI agent can be scheduled to gather data from various sources (analytics, databases, project tools), synthesize the information into a structured report or summary using AI, and distribute it via Slack or email to stakeholders at a set time. This is a necessary workflow to ensure everyone has updated insights, saving countless hours.

LLMWise

Development and Prototyping

Developers can rapidly prototype and build AI features using the more than 30 models that are permanently free. This allows teams to test ideas, validate prompts, and ship initial versions of their application without any financial commitment. During development, Compare mode helps with debugging and with determining which model handles specific edge cases or instructions most effectively.

Production Application Orchestration

For applications in production, LLMWise helps manage AI workloads reliably and cost-effectively. Smart routing sends every user query to the best-suited model, while the failover system protects uptime. Companies can implement optimization policies to balance response speed and cost across millions of requests, giving them a scalable and efficient AI backend through a single API integration.

AI Output Quality Enhancement

Teams that need the highest possible output quality can use the Blend and Judge modes. These modes suit tasks such as generating marketing copy, legal document analysis, complex research summaries, and competitive intelligence reports. By drawing on multiple top-tier models and synthesizing their strengths, you can produce results that a single provider might not match, turning AI from a tool into a competitive advantage.

Cost Optimization and Vendor Management

LLMWise suits cost-conscious teams tired of subscription sprawl. The platform lets you bring your own API keys (BYOK) and pay provider prices directly, with no markup. Alternatively, you can use a unified credit system. Combined with the ability to compare model costs per request and use free models for fallback, this gives you visibility and control over AI expenditure.

Overview

About Activepieces

Activepieces is an open-source AI agent ecosystem that lets every team build and deploy intelligent, autonomous agents without writing a single line of code. It targets businesses that want to eliminate repetitive work, accelerate workflows, and drive organization-wide AI adoption with complete IT governance. Designed for both non-technical users and technical teams, Activepieces reduces complex processes to a four-step agent creation flow. Its core value proposition is the ability to connect over 600 business tools, from Gmail and Slack to CRMs and databases, into smart agents that work independently or together. These agents handle critical tasks like lead qualification, personalized communication, and customer onboarding, delivering productivity gains and cost savings. With built-in components for data storage (Tables), human-in-the-loop approvals (Todos), and integration with leading AI models via the Model Context Protocol (MCP), Activepieces is a complete stack for building controllable, enterprise-grade AI systems that scale with your needs.

About LLMWise

LLMWise is a unified API platform for developers and businesses that want the best AI performance for every task without the operational overhead. It addresses AI provider fragmentation by giving you a single endpoint to access over 62 models from 20+ leading providers, including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. The core value proposition is simplicity: stop juggling multiple subscriptions, managing separate API keys, and guessing which model to use. LLMWise introduces intelligent orchestration, where smart routing automatically matches each prompt to the optimal model based on its specialty: code to GPT, creative writing to Claude, translation to Gemini. Beyond simple access, it provides tools for comparing models, blending outputs for higher quality, and maintaining resilience with automatic failover. Built for developers who prioritize performance, cost-efficiency, and reliability, LLMWise reduces complexity and uses a pay-as-you-go model with no subscriptions, so you only pay for what you use while maintaining complete control.

Frequently Asked Questions

Activepieces FAQ

Do I need coding skills to use Activepieces?

No, coding skills are absolutely not required. Activepieces is built as a visual, no-code platform specifically to empower non-technical users. You build AI agents by connecting visual blocks in the workflow builder, defining triggers, and configuring pre-built actions for the 600+ integrated apps. Technical users can add custom JavaScript if needed, but it is entirely optional.

How does Activepieces ensure security for enterprise use?

Security is a foundational priority. Activepieces offers enterprise-grade features including SOC 2 Type II compliance, GDPR readiness, Single Sign-On (SAML 2.0), SCIM provisioning for user management, and detailed audit logs. For the highest security requirements, you can choose the self-hosted deployment option, which keeps all data within your own private network and infrastructure.

Can I build agents that require human approval?

Yes, human-in-the-loop workflows are a core capability. Using the "Todos" feature, you can design agents that pause at a specific step and require manual approval or input from a team member before proceeding. This is essential for processes like content publishing, expense approvals, or deal reviews where human oversight is non-negotiable before an automated action is finalized.
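The pause-and-approve behavior can be pictured as a small state machine. The sketch below is a generic illustration and does not use or describe the Activepieces "Todos" API: the `Status` enum, the `ApprovalStep` class, and the `run_flow` function are hypothetical names.

```python
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


class ApprovalStep:
    """A step that blocks the flow until a person records a decision."""

    def __init__(self, description: str):
        self.description = description
        self.status = Status.PENDING

    def decide(self, approved: bool) -> None:
        """Record the reviewer's decision; a step can be decided only once."""
        if self.status is not Status.PENDING:
            raise RuntimeError("step has already been decided")
        self.status = Status.APPROVED if approved else Status.REJECTED


def run_flow(step: ApprovalStep, action):
    """Run `action` only after the step has been approved."""
    if step.status is Status.PENDING:
        return "paused"
    if step.status is Status.REJECTED:
        return "cancelled"
    return action()
```

Calling `run_flow` before anyone has decided returns `"paused"`, which models the agent waiting for a team member's input before the automated action is finalized.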

What is the difference between cloud and self-hosted deployment?

The cloud option is a fully-managed service; Activepieces hosts and maintains everything for you, offering quick setup, automatic updates, and high availability. The self-hosted option requires you to deploy and manage the Activepieces software on your own servers or private cloud, giving you full control over data residency, network security, and compliance. The choice depends on your specific security, compliance, and infrastructure requirements.

LLMWise FAQ

How does the pricing work?

LLMWise operates on a transparent, pay-as-you-go credit system with no monthly subscriptions. You start with 20 free trial credits that never expire. After that, you only pay for what you use. Crucially, you have two options: you can use LLMWise credits, or you can Bring Your Own Keys (BYOK) from providers like OpenAI and Anthropic and pay their standard rates directly through LLMWise's dashboard. Over 30 models are also available at a permanent cost of 0 credits for testing and fallback.

What are the free models?

LLMWise provides access to over 30 models that cost 0 credits to use, permanently. This includes models from Google (Gemma 3 series), Meta (Llama series), Arcee AI, Mistral, and others. These are essential for prototyping, serving as a cost-free fallback path during traffic spikes, and for benchmarking against paid models to make informed routing decisions. The availability of these free models is automatically synced from the providers' own catalogs.

How does the smart routing work?

The smart routing feature automatically analyzes your prompt and directs it to the model best suited for the task. This routing is based on proven model specialties—for instance, code generation and complex reasoning are routed to models like GPT-4o or GPT-5.2, while creative writing and nuanced dialogue are sent to Claude Sonnet or Opus. This ensures you consistently get optimal performance without needing to be an expert on every model's specific capabilities.

Is there a risk of vendor lock-in?

No, avoiding vendor lock-in is a core principle of LLMWise. By using the platform, you are actually future-proofing your application against lock-in to any single AI provider. Your integration is with the LLMWise API. If a new, superior model is released from any provider, you can immediately access it through the same endpoint. Furthermore, the BYOK option means you maintain direct relationships with providers, and you can easily compare all alternatives side-by-side.

Alternatives

Activepieces Alternatives

Activepieces is a leading open-source, no-code platform in the AI automation category. It empowers users to build autonomous AI agents that connect to hundreds of apps, automating complex workflows without any programming. This makes it an essential tool for businesses seeking to accelerate operations and eliminate manual tasks.

Users often explore alternatives for several critical reasons. These include budget constraints, the need for specific integrations not available in the current ecosystem, or requirements for different deployment models like on-premise solutions. The search is driven by the necessity to find a platform that aligns perfectly with their operational priorities and technical environment.

When evaluating an alternative, focus on non-negotiable elements. Prioritize the platform's ability to connect to your essential business tools, its pricing transparency and scalability, and the overall user experience for your team. The right choice must fulfill the core necessity of creating reliable, maintainable automations that drive tangible efficiency.

LLMWise Alternatives

LLMWise is a unified API platform in the AI assistants category, designed to give developers a single access point to multiple large language models like GPT, Claude, and Gemini. It uses intelligent auto-routing to select the best model for each specific prompt, aiming to maximize performance and simplify integration.

Users often explore alternatives for various reasons, including specific pricing structures, the need for different feature sets like advanced analytics or custom model support, or platform requirements such as on-premise deployment. Some may seek a different balance between control, cost, and convenience.

When evaluating other solutions, key considerations include the range of supported AI models, the sophistication of routing and failover logic, transparent and flexible pricing without mandatory subscriptions, and robust tools for testing and optimizing performance across different providers.