LLMWise vs ResponseHub

Side-by-side comparison to help you choose the right tool.

LLMWise

Access all top AI models through one API with smart routing and pay only for what you use.

Last updated: February 28, 2026

ResponseHub

Automate security questionnaires with AI for fast, accurate, and compliant responses.

Last updated: February 28, 2026

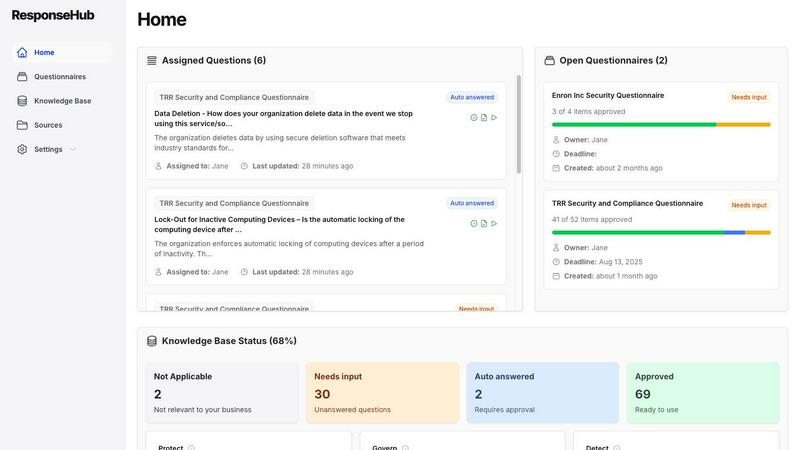

Feature Comparison

LLMWise

Intelligent Model Routing

This is the foundational, must-have feature. You send a single prompt to the LLMWise API, and its smart routing engine automatically selects the optimal large language model for that specific task. It intelligently matches prompts to model strengths, sending coding queries to GPT, creative briefs to Claude, and translation requests to Gemini. This eliminates the guesswork and manual model selection, ensuring you consistently get the highest quality output for every request without any extra effort.
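To make the routing idea concrete, here is a minimal sketch of prompt-to-model matching. It is not LLMWise's routing engine: the keyword rules, the `route_prompt` function, and the default model are all hypothetical, and a production router would likely use a trained classifier rather than regular expressions. Only the category-to-model pairing (code to GPT, creative to Claude, translation to Gemini) comes from the description above.

```python
import re

# Category-to-model pairing taken from the examples in the text.
ROUTES = {
    "code": "gpt",
    "creative": "claude",
    "translation": "gemini",
}

# Hypothetical keyword rules, checked in order; purely illustrative.
RULES = [
    ("translation", re.compile(r"\b(translate|translation)\b", re.I)),
    ("code", re.compile(r"\b(function|bug|python|sql|refactor|compile)\b", re.I)),
    ("creative", re.compile(r"\b(story|poem|slogan|tagline|creative)\b", re.I)),
]

DEFAULT_MODEL = "gpt"  # assumed fallback when no rule matches


def route_prompt(prompt: str) -> str:
    """Return the model family for the first rule that matches the prompt."""
    for category, pattern in RULES:
        if pattern.search(prompt):
            return ROUTES[category]
    return DEFAULT_MODEL
```

Calling `route_prompt("Write a poem about autumn")` selects the creative route, while a prompt that matches no rule falls through to the assumed default.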

Compare, Blend, and Judge Modes

LLMWise provides essential orchestration modes that are critical for production-grade AI applications. Compare mode runs a single prompt across multiple models side-by-side in one request, allowing you to instantly benchmark speed, cost, and output quality. Blend mode takes this further by synthesizing the best parts of each model's response into one superior, consolidated answer. Judge mode enables models to evaluate and critique each other's outputs, providing an automated layer of quality assurance and validation.
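The three modes can be sketched as plain functions over a set of model callables. This is an illustration under stated assumptions, not the LLMWise API: `compare`, `judge`, and `blend` are hypothetical names, each model is assumed to be a function from prompt to text, and the scoring and synthesis steps are supplied by the caller.

```python
import time
from typing import Callable, Dict

Model = Callable[[str], str]  # assumed shape: prompt in, text out


def compare(prompt: str, models: Dict[str, Model]) -> Dict[str, dict]:
    """Run one prompt against every model and record output and latency."""
    results = {}
    for name, model in models.items():
        start = time.perf_counter()
        output = model(prompt)
        results[name] = {"output": output, "seconds": time.perf_counter() - start}
    return results


def judge(prompt: str, results: Dict[str, dict],
          score: Callable[[str, str], float]) -> str:
    """Return the name of the model whose output scores highest."""
    return max(results, key=lambda name: score(prompt, results[name]["output"]))


def blend(prompt: str, results: Dict[str, dict], synthesizer: Model) -> str:
    """Hand all drafts to a synthesizer model and return its combined answer."""
    drafts = "\n".join(
        f"[{name}] {result['output']}" for name, result in sorted(results.items())
    )
    return synthesizer(
        f"Question: {prompt}\nDrafts:\n{drafts}\nWrite one combined answer."
    )
```

In this sketch the judge is just a scoring function; in practice the score would itself come from a model asked to critique each draft.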

Resilient Circuit-Breaker Failover

This feature is non-negotiable for any serious application. LLMWise includes a built-in circuit-breaker system that provides automatic failover to backup models if a primary provider experiences downtime or high latency. This ensures your application remains operational and resilient, never breaking due to external API outages. It is a critical component for maintaining uptime and delivering a reliable experience to your end-users without manual intervention.
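The circuit-breaker pattern behind this kind of failover can be sketched in a few lines. The class and function names, the failure threshold, and the cool-down period below are assumptions chosen for illustration; the source does not describe how LLMWise implements or configures its breaker.

```python
import time


class CircuitBreaker:
    """Opens after repeated failures, then allows a trial call after a cool-down."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # assumed threshold
        self.reset_after = reset_after    # assumed cool-down in seconds
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def allow(self):
        if self.opened_at is None:
            return True
        # Half-open state: permit a trial call once the cool-down has passed.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


def call_with_failover(prompt, providers, breakers):
    """Try providers in priority order, skipping any whose circuit is open.

    providers: list of (name, callable) pairs; breakers: dict name -> CircuitBreaker.
    """
    errors = {}
    for name, call in providers:
        breaker = breakers[name]
        if not breaker.allow():
            errors[name] = "circuit open"
            continue
        try:
            result = call(prompt)
        except Exception as exc:
            breaker.record_failure()
            errors[name] = repr(exc)
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError(f"all providers failed: {errors}")
```

Once the primary provider's breaker opens, requests go straight to the backup without paying the cost of another failed call, and the primary is retried only after the cool-down.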

Test, Benchmark, and Optimize Suite

You must have the tools to optimize performance and cost. LLMWise offers a comprehensive suite for testing and optimization, including benchmark suites, batch testing capabilities, and configurable optimization policies. You can set policies to prioritize speed, cost, or reliability for different types of requests. Automated regression checks ensure new model versions or prompts do not degrade your application's output quality, making it an indispensable tool for continuous improvement.
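A policy that prioritizes speed, cost, or reliability, plus a simple regression check, might look like the sketch below. The `ModelStats` fields, the policy names as dictionary keys, and the tolerance value are hypothetical; the source does not document how LLMWise represents policies or scores regressions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class ModelStats:
    name: str
    latency_s: float         # measured latency from a benchmark run
    cost_per_request: float  # measured or estimated cost
    success_rate: float      # fraction of requests that succeeded


# Each policy maps to the quantity it tries to minimize.
POLICY_KEYS = {
    "speed": lambda m: m.latency_s,
    "cost": lambda m: m.cost_per_request,
    "reliability": lambda m: -m.success_rate,
}


def pick_model(stats, policy):
    """Return the name of the model that best satisfies the given policy."""
    return min(stats, key=POLICY_KEYS[policy]).name


def has_regressed(baseline_scores, candidate_scores, tolerance=0.02):
    """Flag a regression when the candidate's mean score drops past the tolerance."""
    return mean(candidate_scores) < mean(baseline_scores) - tolerance
```

The same benchmark data can therefore drive different choices per request type, and `has_regressed` can gate a prompt or model change on a fixed test set.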

ResponseHub

AI-Powered Spreadsheet Parser

This feature is absolutely necessary for handling the chaotic reality of incoming questionnaires. It intelligently parses any Excel file, regardless of complex cover sheets, multiple tabs, or ambiguous column headers. The AI automatically identifies and extracts every question, saving you the hours of manual copying, pasting, and reformatting that traditionally plague this process. You can upload and get to work instantly.
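As a rough illustration of the extraction problem, the sketch below finds the question column on each sheet with a simple heuristic. It is a stand-in, not ResponseHub's parser, which the source describes as AI-based. The function names and the list of question words are hypothetical, and the workbook is assumed to be already loaded into a dict mapping sheet names to rows of cell values.

```python
# Hypothetical cues that a cell holds a questionnaire item.
QUESTION_WORDS = ("do ", "does ", "is ", "are ", "how ", "what ", "describe ")


def looks_like_question(cell):
    """Heuristic: a longish string that ends in '?' or starts with a question word."""
    if not isinstance(cell, str):
        return False
    text = cell.strip().lower()
    return len(text) > 10 and (text.endswith("?") or text.startswith(QUESTION_WORDS))


def extract_questions(sheets):
    """Return (sheet name, row number, question) tuples from every sheet.

    sheets: dict mapping sheet name -> list of rows, each row a list of cells.
    """
    found = []
    for sheet_name, rows in sheets.items():
        width = max((len(row) for row in rows), default=0)
        # Count question-like cells per column to locate the question column.
        counts = [
            sum(looks_like_question(row[col]) for row in rows if col < len(row))
            for col in range(width)
        ]
        if not counts or max(counts) == 0:
            continue  # cover sheets and instructions pages end up here
        col = counts.index(max(counts))
        for row_number, row in enumerate(rows, start=1):
            if col < len(row) and looks_like_question(row[col]):
                found.append((sheet_name, row_number, row[col].strip()))
    return found
```

A heuristic like this breaks down on merged cells and ambiguous headers, which is the gap an AI-based parser is meant to close.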

Automated & Intelligent Knowledge Base

Your centralized Knowledge Base is the vital source of truth for all answers. Critically, it is not static. ResponseHub's AI automatically suggests new entries and updates based on completed questionnaires and newly uploaded source documents. This continuous learning is essential for maintaining an accurate, ever-evolving compliance posture without constant manual oversight.

Precise Answer Citations for Total Confidence

Every single answer generated by ResponseHub is directly referenced to the exact source document, policy, page, section, and sentence. This granular citation is non-negotiable for audit trails and provides the absolute confidence needed to sign off on high-stakes security information, eliminating the risk of guesswork or error in your submissions.
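The shape of a cited answer can be sketched as a lookup that returns both the supporting sentence and where it came from. This is a simplified illustration, not ResponseHub's citation engine: the `Passage` fields mirror the citation levels named above, while the word-overlap matching and the `answer_with_citation` function are assumptions.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    document: str
    page: int
    section: str
    sentence: str


def tokens(text):
    """Lowercase word set used for a crude overlap match."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer_with_citation(question, passages):
    """Return the best-matching sentence with its citation, or None if nothing matches."""
    q = tokens(question)
    best = max(passages, key=lambda p: len(q & tokens(p.sentence)), default=None)
    if best is None or not (q & tokens(best.sentence)):
        return None
    return {
        "answer": best.sentence,
        "citation": f"{best.document}, p. {best.page}, {best.section}",
    }
```

Returning `None` when no passage matches keeps the sketch from producing an uncited answer, which is the property an audit trail depends on.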

Collaborative Workflow & Delegation

Security questionnaires require input from multiple stakeholders. This feature allows you to efficiently assign specific questions to subject matter experts (e.g., your CTO or DevOps lead) and delegate final approvals. All changes are tracked and logged, creating a clear audit trail and ensuring accountability while streamlining internal collaboration.

Use Cases

LLMWise

Development and Prototyping

Developers can rapidly prototype and build AI features using the more than 30 permanently free models available at zero cost. This allows teams to test ideas, validate prompts, and ship initial versions of their application without any financial commitment. Compare mode is useful for debugging and for determining which model handles specific edge cases or instructions most effectively during the development phase.

Production Application Orchestration

For applications in production, LLMWise is a necessity for managing AI workloads reliably and cost-effectively. The smart routing ensures every user query is handled by the best-suited model, while the failover system guarantees uptime. Companies can implement optimization policies to balance response speed and cost across millions of requests, ensuring a scalable and efficient AI backend through a single, simple API integration.

AI Output Quality Enhancement

Teams that require the highest possible quality output must use the Blend and Judge modes. This is critical for generating marketing copy, legal document analysis, complex research summaries, or competitive intelligence reports. By leveraging multiple top-tier models and synthesizing their strengths, you can produce results that surpass the capability of any single provider, turning AI from a tool into a competitive advantage.

Cost Optimization and Vendor Management

LLMWise is essential for finance-conscious teams tired of subscription sprawl. The platform allows you to bring your own API keys (BYOK) and pay provider prices directly, eliminating markups. Alternatively, you can use a unified credit system. This approach, combined with the ability to compare model costs per request and utilize free models for fallback, provides unprecedented visibility and control over AI expenditure, making it a mandatory financial management tool.

ResponseHub

Accelerating Enterprise Sales Cycles

For sales teams chasing large enterprise deals, a slow response to a security questionnaire can kill momentum and cost you the deal. ResponseHub helps teams generate complete, confident answers quickly, often in under a day, to keep the sales process moving and secure revenue without delay.

Empowering Lean Security & Compliance Teams

Small to mid-sized companies often have a single person managing all security and compliance. ResponseHub acts as a force multiplier, allowing that individual to manage a high volume of questionnaires efficiently without becoming a bottleneck, freeing them to focus on strategic security initiatives rather than administrative paperwork.

Streamlining Third-Party Vendor Risk Management

When your organization needs to assess the security of your own vendors, you must send out questionnaires. ResponseHub can be used to standardize and manage this outgoing process, ensuring you collect consistent, well-structured information from your vendors to make informed risk decisions faster.

Onboarding for Security Certifications (SOC 2, ISO 27001)

Preparing for a major audit involves answering hundreds of detailed control questions. ResponseHub is indispensable for organizing all evidence and policy references in one place. Its citation engine directly maps controls to evidence, drastically reducing preparation time and stress for the audit.

Overview

About LLMWise

LLMWise is a unified API platform for developers and businesses that want the best AI performance for every task without the operational overhead. It addresses AI provider fragmentation by giving you a single endpoint to access over 62 models from 20+ leading providers, including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. The core value proposition is straightforward: stop juggling multiple subscriptions, managing separate API keys, and guessing which model to use. LLMWise introduces intelligent orchestration, where smart routing automatically matches each prompt to the optimal model based on its specialty: code to GPT, creative writing to Claude, translation to Gemini. Beyond simple access, it provides tools for comparing models, blending outputs for higher quality, and staying resilient with automatic failover. Built for developers who prioritize performance, cost-efficiency, and reliability, LLMWise uses a pay-as-you-go model with no subscriptions, so you pay only for what you use while maintaining complete control.

About ResponseHub

ResponseHub is the essential AI-powered platform that eliminates the manual burden and high-stakes risk of security questionnaires. For compliance teams, security officers, and executives, completing vendor security assessments is a non-negotiable but traditionally grueling process that consumes days of valuable time and diverts focus from core business objectives. ResponseHub transforms this necessity into a streamlined, confident, and rapid operation. By automating the parsing, answering, and citation of complex questionnaire spreadsheets, the platform cuts completion time from days to mere hours. Its core value proposition is delivering 100% confidence in every answer through precise citations to your exact policies, while its self-updating AI Knowledge Base ensures your compliance posture is always current. In an environment where inaccurate security information can lead to catastrophic reputational damage and lost deals, ResponseHub is not just a tool—it is a critical business imperative for any organization undergoing frequent security reviews.

Frequently Asked Questions

LLMWise FAQ

How does the pricing work?

LLMWise operates on a transparent, pay-as-you-go credit system with no monthly subscriptions. You start with 20 free trial credits that never expire. After that, you only pay for what you use. Crucially, you have two options: you can use LLMWise credits, or you can Bring Your Own Keys (BYOK) from providers like OpenAI and Anthropic and pay their standard rates directly through LLMWise's dashboard. Over 30 models are also available at a permanent cost of 0 credits for testing and fallback.

What are the free models?

LLMWise provides access to over 30 models that cost 0 credits to use, permanently. This includes models from Google (Gemma 3 series), Meta (Llama series), Arcee AI, Mistral, and others. These are essential for prototyping, serving as a cost-free fallback path during traffic spikes, and for benchmarking against paid models to make informed routing decisions. The availability of these free models is automatically synced from the providers' own catalogs.

How does the smart routing work?

The smart routing feature automatically analyzes your prompt and directs it to the model best suited for the task. This routing is based on proven model specialties—for instance, code generation and complex reasoning are routed to models like GPT-4o or GPT-5.2, while creative writing and nuanced dialogue are sent to Claude Sonnet or Opus. This ensures you consistently get optimal performance without needing to be an expert on every model's specific capabilities.

Is there a risk of vendor lock-in?

No, avoiding vendor lock-in is a core principle of LLMWise. By using the platform, you are actually future-proofing your application against lock-in to any single AI provider. Your integration is with the LLMWise API. If a new, superior model is released from any provider, you can immediately access it through the same endpoint. Furthermore, the BYOK option means you maintain direct relationships with providers, and you can easily compare all alternatives side-by-side.

ResponseHub FAQ

How does ResponseHub ensure the accuracy of its AI-generated answers?

Accuracy is paramount. ResponseHub does not generate answers from a generic database. It exclusively uses your own uploaded source documents (policies, SOPs) and your curated Knowledge Base. The AI finds the most relevant information from your trusted sources, and every answer includes a precise citation so you can verify it instantly. You maintain complete control and oversight.

What if I don't have formal security policies to upload?

This is a common starting point. ResponseHub includes a free policy generator to help you create essential security documents in minutes. You can also start by importing an existing informal knowledge base from tools like Notion or Google Sheets, or generate a foundational one based on standards like the NIST Cybersecurity Framework.

Can ResponseHub handle any security questionnaire format?

Yes, this is a core strength. The AI-powered parser is specifically designed to handle the messy reality of real-world questionnaires. It accurately extracts questions from any Excel spreadsheet, regardless of complex formatting, multiple sheets, merged cells, or unusual layouts, eliminating the manual "spreadsheet hell" of reformatting.

Is my sensitive data secure within the ResponseHub platform?

Data security is a fundamental necessity. ResponseHub is built with enterprise-grade security practices. You retain full ownership of your data. The platform employs robust encryption both in transit and at rest. You can contact the team for a detailed security whitepaper and to discuss specific compliance requirements like SOC 2.

Alternatives

LLMWise Alternatives

LLMWise is a unified API platform in the AI assistants category, designed to give developers a single access point to multiple large language models like GPT, Claude, and Gemini. It uses intelligent auto-routing to select the best model for each specific prompt, aiming to maximize performance and simplify integration. Users often explore alternatives for various reasons, including specific pricing structures, the need for different feature sets like advanced analytics or custom model support, or platform requirements such as on-premise deployment. Some may seek a different balance between control, cost, and convenience. When evaluating other solutions, key considerations include the range of supported AI models, the sophistication of routing and failover logic, transparent and flexible pricing without mandatory subscriptions, and robust tools for testing and optimizing performance across different providers.

ResponseHub Alternatives

ResponseHub is an AI-powered security questionnaire automation platform. It belongs to the category of AI assistants designed to streamline vendor security assessments and compliance workflows. By automating the parsing and answering of complex questionnaires, it drastically reduces manual effort and turnaround time. Users often explore alternatives for various reasons. These can include budget constraints, the need for specific integrations with their existing tech stack, or a requirement for different feature sets like custom reporting or team collaboration tools. The search for the right tool is driven by finding the optimal fit for an organization's unique processes and scale. When evaluating an alternative, prioritize solutions that directly address your core pain points. Key considerations should include the accuracy and intelligence of the AI engine, the platform's ability to handle your specific document formats and questionnaire complexities, and the robustness of its knowledge management system. Security and data handling protocols are also non-negotiable criteria for any platform managing sensitive compliance information.