Kane AI vs LLMWise

Side-by-side comparison to help you choose the right tool.

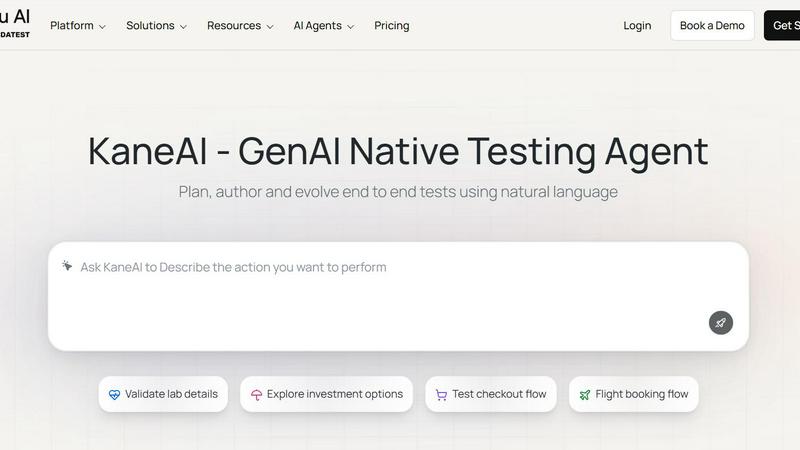

Kane AI

Kane AI is an AI testing agent that creates and evolves tests using plain English.

Last updated: February 28, 2026

LLMWise

Access all top AI models through one API with smart routing and pay only for what you use.

Last updated: February 28, 2026

Feature Comparison

Kane AI

Natural Language Test Authoring

This is a foundational feature that eliminates the need for manual coding. Teams can simply converse with Kane AI, describing test objectives, steps, or complex conditional logic in plain English. The agent interprets these instructions and generates detailed, executable test cases automatically, making test creation accessible to both technical and non-technical team members and dramatically speeding up the authoring process.

Intelligent Test Planner & Scenario Generation

Kane AI can ingest high-level requirements from various sources like JIRA tickets, PRDs, PDFs, images, or even audio to automatically create structured test plans and scenarios. This ensures test strategies are directly aligned with business goals from the outset. The Human-in-the-Loop approval process allows teams to review and approve AI-generated plans before execution, maintaining necessary control and intent.

Unified Multi-Layer Testing

This is a critical capability for comprehensive quality assurance. Kane AI enables testing across every layer of an application in one seamless workflow. Teams can validate UI flows, check API responses and payloads, run database queries, and conduct accessibility audits simultaneously, eliminating coverage gaps and testing silos that are common with disparate tools.

GenAI-Powered Execution & Healing

Kane AI executes tests across 3000+ browser, OS, and device combinations. During execution, it employs auto bug detection and GenAI-powered self-healing to intelligently adapt to minor UI changes, automatically dismissing pop-ups and maintaining test flow. This creates resilient test suites that require less maintenance and provide reliable results, which is essential for continuous testing pipelines.

LLMWise

Intelligent Model Routing

Smart routing is the core feature. You send a single prompt to the LLMWise API, and its routing engine automatically selects a large language model for that task. It matches prompts to model strengths, sending coding queries to GPT, creative briefs to Claude, and translation requests to Gemini. This removes the guesswork of manual model selection, so each request goes to a model suited to it without extra effort on your part.
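The routing idea described above can be illustrated with a small sketch. This is not LLMWise's routing engine: the keyword lists, the `classify` and `route` functions, and the default model are all hypothetical, and a production router would presumably use a trained classifier rather than keyword matching. Only the category-to-model pairs (code to GPT, creative to Claude, translation to Gemini) come from the text.

```python
from typing import Optional

# Category -> model family, as described in the text.
ROUTES = {
    "code": "gpt",
    "creative": "claude",
    "translation": "gemini",
}

# Hypothetical keyword hints, for illustration only.
KEYWORDS = {
    "code": ("function", "bug", "python", "compile", "refactor"),
    "creative": ("story", "poem", "slogan", "tagline"),
    "translation": ("translate", "translation"),
}

DEFAULT_MODEL = "gpt"  # assumed fallback when no category matches


def classify(prompt: str) -> Optional[str]:
    """Return the first category whose keyword appears in the prompt."""
    text = prompt.lower()
    for category, words in KEYWORDS.items():
        if any(word in text for word in words):
            return category
    return None


def route(prompt: str) -> str:
    """Pick a model family for the prompt, falling back to the default."""
    return ROUTES.get(classify(prompt), DEFAULT_MODEL)
```

The point of the sketch is the shape of the decision: classify the prompt first, then look up the model, with an explicit default for prompts that match nothing.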

Compare, Blend, and Judge Modes

LLMWise provides essential orchestration modes that are critical for production-grade AI applications. Compare mode runs a single prompt across multiple models side-by-side in one request, allowing you to instantly benchmark speed, cost, and output quality. Blend mode takes this further by synthesizing the best parts of each model's response into one superior, consolidated answer. Judge mode enables models to evaluate and critique each other's outputs, providing an automated layer of quality assurance and validation.
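As a rough illustration of Compare and Judge, the sketch below runs one prompt through several models and then picks a winner with a scoring function. It does not call the LLMWise API: models are stand-in callables, and `compare`, `judge`, and the `score` argument are hypothetical names. In the Judge mode described above, the scorer would itself be a model; here it is any function that maps an output to a number.

```python
import time
from typing import Callable, Dict

Model = Callable[[str], str]  # stand-in for a real model call


def compare(prompt: str, models: Dict[str, Model]) -> Dict[str, dict]:
    """Run the same prompt on every model and record output and latency."""
    results = {}
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        results[name] = {
            "output": output,
            "seconds": time.perf_counter() - start,
        }
    return results


def judge(results: Dict[str, dict], score: Callable[[str], float]) -> str:
    """Return the name of the model whose output scores highest."""
    return max(results, key=lambda name: score(results[name]["output"]))
```

Blend mode would add a further step that merges the collected outputs into one answer, which is left out here because it requires another model call.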

Resilient Circuit-Breaker Failover

LLMWise includes a built-in circuit-breaker system that fails over automatically to backup models if a primary provider experiences downtime or high latency. This keeps your application operational during external API outages, as long as at least one backup model is reachable. It helps maintain uptime and a reliable experience for end users without manual intervention.
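For readers unfamiliar with the pattern, here is a minimal circuit-breaker sketch with ordered failover. It illustrates the general technique, not LLMWise's implementation: the `CircuitBreaker` class, `call_with_failover`, and the thresholds are assumptions, and providers are plain callables rather than real API clients.

```python
import time


class CircuitBreaker:
    """Stops sending traffic to a provider after repeated failures."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # failures before the circuit opens
        self.reset_after = reset_after    # cool-down in seconds
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def available(self):
        if self.opened_at is None:
            return True
        # After the cool-down, allow a trial call (half-open state).
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


def call_with_failover(prompt, providers, breakers):
    """Try providers in order, skipping any whose circuit is open.

    providers: ordered list of (name, callable) pairs.
    breakers:  dict mapping provider name to its CircuitBreaker.
    """
    for name, call in providers:
        breaker = breakers[name]
        if not breaker.available():
            continue
        try:
            result = call(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("all providers unavailable")
```

Once a provider's circuit opens, requests skip it entirely until the cool-down passes, so a failing primary stops adding latency to every call.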

Test, Benchmark, and Optimize Suite

LLMWise offers a suite for testing and optimization that includes benchmark suites, batch testing, and configurable optimization policies. You can set policies to prioritize speed, cost, or reliability for different types of requests. Automated regression checks verify that new model versions or prompts do not degrade your application's output quality, which supports continuous improvement.
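One way to picture an optimization policy is as a ranking rule over measured model statistics. The sketch below is a hypothetical illustration, not LLMWise's configuration format: the `ModelStats` fields, the `POLICIES` table, and `pick_model` are assumed names, and the statistics would come from your own benchmark runs.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ModelStats:
    name: str
    latency_ms: float          # measured average latency
    cost_per_1k_tokens: float  # price in your billing unit
    success_rate: float        # fraction of successful calls, 0.0 to 1.0


# Each policy maps a model to a value where lower is better.
POLICIES = {
    "speed": lambda m: m.latency_ms,
    "cost": lambda m: m.cost_per_1k_tokens,
    "reliability": lambda m: -m.success_rate,
}


def pick_model(candidates: Iterable[ModelStats], policy: str) -> ModelStats:
    """Return the candidate that ranks best under the given policy."""
    if policy not in POLICIES:
        raise ValueError(f"unknown policy: {policy}")
    return min(candidates, key=POLICIES[policy])
```

Different request types can then carry different policy names, for example "speed" for interactive chat and "cost" for batch jobs.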

Use Cases

Kane AI

Accelerating Test Automation for Agile/DevOps Teams

For teams practicing Agile or DevOps, speed is non-negotiable. Kane AI allows developers and QA engineers to generate and execute automated tests directly from user stories or bug tickets in natural language. This integrates testing into the CI/CD pipeline seamlessly, enabling rapid feedback and continuous delivery without bottlenecking the development process with slow, manual test creation.

Achieving Comprehensive API and Backend Validation

Ensuring backend services are robust is essential. Kane AI's smarter API testing allows teams to design and validate API workflows alongside UI tests in a unified strategy. With real-time network checks for status codes and payloads, teams can ensure data integrity and service reliability, providing full-stack coverage that is often missed by front-end-only testing tools.
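The kind of status-code and payload check mentioned above can be sketched in a few lines. This is a generic illustration, not Kane AI's API or generated output: `check_response` and its parameters are hypothetical, and the response is passed in as plain values so the example needs no network access.

```python
from typing import Any, Dict, Iterable, List


def check_response(
    status: int,
    payload: Dict[str, Any],
    expected_status: int,
    required_fields: Iterable[str],
) -> List[str]:
    """Return a list of problems; an empty list means the check passed."""
    problems = []
    if status != expected_status:
        problems.append(f"expected status {expected_status}, got {status}")
    for field in required_fields:
        if field not in payload:
            problems.append(f"missing field: {field}")
    return problems
```

Returning every problem at once, rather than stopping at the first, gives a fuller picture of what an endpoint got wrong in a single run.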

Enabling Enterprise Test Management at Scale

Large organizations with complex tech stacks and compliance needs require a scalable, secure solution. Kane AI's enterprise-ready architecture with SSO, RBAC, and audit logs, combined with its ability to create modular, reusable test components, allows for centralized test management across multiple projects and teams, ensuring consistency, security, and governance at scale.

Simplifying Cross-Browser and Cross-Device Testing

Delivering a consistent user experience across all platforms is a mandatory requirement. Kane AI's integration with HyperExecute allows teams to schedule and run their AI-generated tests across a grid of 3000+ real browsers, operating systems, and mobile devices, validating both visual consistency and functional reliability on each combination.

LLMWise

Development and Prototyping

Developers can rapidly prototype and build AI features using the more than 30 models that are permanently available at zero cost. This allows teams to test ideas, validate prompts, and ship initial versions of their application without any financial commitment. During development, Compare mode helps with debugging and with determining which model handles specific edge cases or instructions most effectively.

Production Application Orchestration

For applications in production, LLMWise is a necessity for managing AI workloads reliably and cost-effectively. The smart routing ensures every user query is handled by the best-suited model, while the failover system guarantees uptime. Companies can implement optimization policies to balance response speed and cost across millions of requests, ensuring a scalable and efficient AI backend through a single, simple API integration.

AI Output Quality Enhancement

Teams that require the highest possible quality output must use the Blend and Judge modes. This is critical for generating marketing copy, legal document analysis, complex research summaries, or competitive intelligence reports. By leveraging multiple top-tier models and synthesizing their strengths, you can produce results that surpass the capability of any single provider, turning AI from a tool into a competitive advantage.

Cost Optimization and Vendor Management

LLMWise is aimed at cost-conscious teams tired of subscription sprawl. The platform allows you to bring your own API keys (BYOK) and pay provider prices directly, eliminating markups. Alternatively, you can use a unified credit system. Combined with the ability to compare model costs per request and use free models as a fallback, this gives you visibility into and control over AI expenditure.

Overview

About Kane AI

Kane AI is a first-of-its-kind, GenAI-native testing agent engineered for high-speed Quality Engineering teams. It is an essential platform that fundamentally transforms test automation by allowing teams to plan, author, manage, debug, and evolve end-to-end tests using simple natural language. This drastically reduces the traditional barriers of time and deep technical expertise required to start and scale automation efforts. Built to handle complex, real-world workflows, Kane AI supports all major programming languages and frameworks without the performance compromises of legacy low-code tools. Its core value proposition is enabling reliable, continuous software delivery at speed by unifying testing for databases, APIs, accessibility, and UI into a single, intelligent flow. With enterprise-ready features like SSO, RBAC, and seamless integrations with tools like Jira, it is a necessary solution for modern development teams seeking to improve coverage, streamline execution, and accelerate release cycles with AI-powered precision.

About LLMWise

LLMWise is a unified API platform for developers and businesses that want strong AI performance on every task without the operational overhead. It addresses AI provider fragmentation by giving you a single endpoint to access over 62 models from 20+ leading providers, including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. The core value proposition is straightforward: stop juggling multiple subscriptions, managing separate API keys, and guessing which model to use. LLMWise introduces intelligent orchestration, where smart routing automatically matches each prompt to a model based on its specialty: code to GPT, creative writing to Claude, translation to Gemini. Beyond simple access, it provides tools for comparing models, blending outputs for higher quality, and maintaining resilience with automatic failover. Built for developers who prioritize performance, cost-efficiency, and reliability, LLMWise uses a pay-as-you-go model with no subscriptions, so you only pay for what you use while keeping control over your providers and spending.

Frequently Asked Questions

Kane AI FAQ

How does Kane AI differ from traditional low-code testing tools?

Kane AI is fundamentally different as it is a GenAI-native agent, not just a record-and-playback or drag-and-drop tool. While low-code tools simplify scripting, they often struggle with complex logic and maintenance. Kane AI understands intent through natural language, generates intelligent test plans, handles sophisticated conditionals, and offers self-healing capabilities. It is built for complex, multi-layer testing across any framework without the performance trade-offs typical of traditional tools.

Can Kane AI integrate with our existing development workflow?

Absolutely. Seamless integration is a core strength. Kane AI offers native integrations with Jira and Azure DevOps, allowing teams to create test cases, trigger automation runs, and raise bug tickets directly within their project management tools. This keeps the entire testing workflow embedded within the existing development lifecycle without requiring context switching or extra effort.

What kind of tests can I author with natural language?

You can author a wide range of end-to-end test scenarios. This includes complex UI flows for web and mobile applications (like checkout processes or flight bookings), API testing sequences, database validation tests, and accessibility checks. You can describe high-level objectives, specific step-by-step interactions, data-driven scenarios, and conditional assertions, all in plain English.

Is Kane AI suitable for non-technical team members like product managers or business analysts?

Yes, it is specifically designed to be accessible. Product managers and business analysts can use Kane AI to translate product requirements, PRDs, or acceptance criteria directly into structured test cases using natural language. This empowers them to contribute directly to the quality process, ensuring the software aligns with business intent from the earliest stages.

LLMWise FAQ

How does the pricing work?

LLMWise operates on a transparent, pay-as-you-go credit system with no monthly subscriptions. You start with 20 free trial credits that never expire. After that, you only pay for what you use. Crucially, you have two options: you can use LLMWise credits, or you can Bring Your Own Keys (BYOK) from providers like OpenAI and Anthropic and pay their standard rates directly through LLMWise's dashboard. Over 30 models are also available at a permanent cost of 0 credits for testing and fallback.

What are the free models?

LLMWise provides access to over 30 models that cost 0 credits to use, permanently. This includes models from Google (Gemma 3 series), Meta (Llama series), Arcee AI, Mistral, and others. These are essential for prototyping, serving as a cost-free fallback path during traffic spikes, and for benchmarking against paid models to make informed routing decisions. The availability of these free models is automatically synced from the providers' own catalogs.

How does the smart routing work?

The smart routing feature automatically analyzes your prompt and directs it to the model best suited for the task. This routing is based on proven model specialties—for instance, code generation and complex reasoning are routed to models like GPT-4o or GPT-5.2, while creative writing and nuanced dialogue are sent to Claude Sonnet or Opus. This ensures you consistently get optimal performance without needing to be an expert on every model's specific capabilities.

Is there a risk of vendor lock-in?

No, avoiding vendor lock-in is a core principle of LLMWise. By using the platform, you are actually future-proofing your application against lock-in to any single AI provider. Your integration is with the LLMWise API. If a new, superior model is released from any provider, you can immediately access it through the same endpoint. Furthermore, the BYOK option means you maintain direct relationships with providers, and you can easily compare all alternatives side-by-side.

Alternatives

Kane AI Alternatives

Kane AI is a GenAI-native testing agent that automates the planning, creation, and evolution of software tests using natural language. It belongs to the category of AI assistants for quality engineering, designed to accelerate test automation for development teams. Users often explore alternatives for various reasons, including budget constraints, specific feature requirements like integration with niche tools, or a need for a different deployment model such as on-premise versus cloud. When evaluating other solutions, it's crucial to assess core capabilities against your team's workflow. Key considerations include the tool's ability to handle complex, multi-language test automation, the depth of its AI for intelligent test generation and healing, and the robustness of its integrations with existing DevOps and project management ecosystems. The ideal alternative should demonstrably reduce manual effort while scaling with your application's complexity.

LLMWise Alternatives

LLMWise is a unified API platform in the AI assistants category, designed to give developers a single access point to multiple large language models like GPT, Claude, and Gemini. It uses intelligent auto-routing to select the best model for each specific prompt, aiming to maximize performance and simplify integration. Users often explore alternatives for various reasons, including specific pricing structures, the need for different feature sets like advanced analytics or custom model support, or platform requirements such as on-premise deployment. Some may seek a different balance between control, cost, and convenience. When evaluating other solutions, key considerations include the range of supported AI models, the sophistication of routing and failover logic, transparent and flexible pricing without mandatory subscriptions, and robust tools for testing and optimizing performance across different providers.